Back to Basics

Intro to Deep Learning : CNNs

Part 2 of 3

"Back to Basics"

- This is a three-part introductory series :

- Appropriate for Beginners!

- Code-along is helpful for everyone!

- Last week : MLPs (& fundamentals)

- This week : CNNs (for vision)

- Next week : Transformers (for text)

Event Extras

Plan of Action

- Each part has 2 segments :

- Talk = Mostly Martin

- Code-along = Mostly Sam

- Ask questions at any time!

Today's Talk

- (Housekeeping)

- Vision use-cases

- 2 new Layers!

- Representation Learning

- Concrete example(s) with code

- Fire up your browser for code-along

About Me

- Machine Intelligence / Startups / Finance

- Moved from NYC to Singapore in Sep-2013

- 2014 = 'fun' :

- Machine Learning, Deep Learning, NLP

- Robots, drones

- Since 2015 = 'serious' : NLP + deep learning

- GDE ML; ML-Singapore co-organiser

- Red Dragon AI...

About Red Dragon AI

- Google Partner : Deep Learning Consulting & Prototyping

- SGInnovate/Govt : Education / Training

- Research : NeurIPS / EMNLP / NAACL

- Products :

- Conversational Computing

- Natural Voice Generation - multiple languages

- Knowledgebase interaction & reasoning

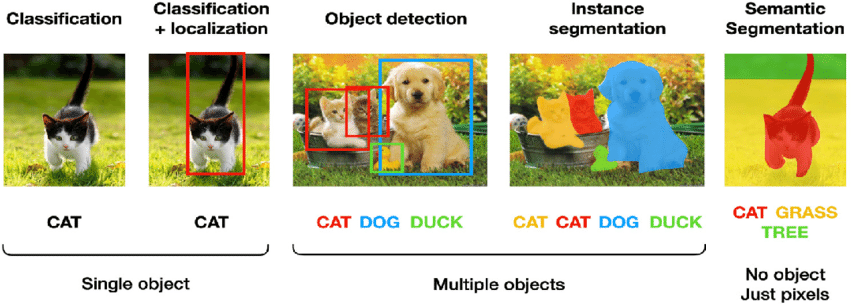

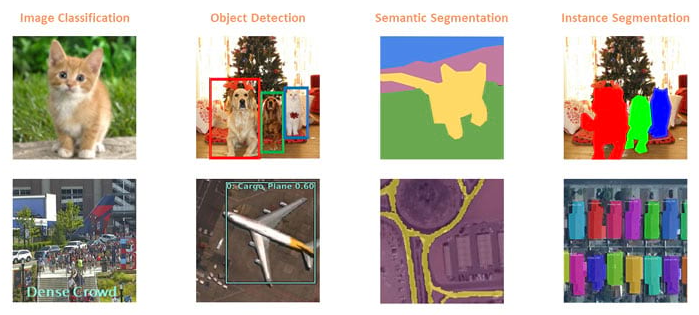

Vision Basics

Adapted from here

Vision Applications

From : Deep Learning meets GIS

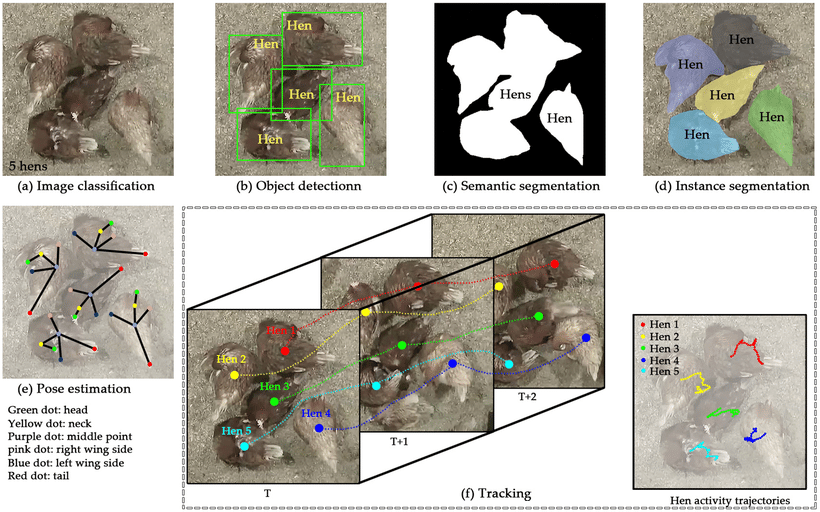

Example : Hens

Practices and Applications of Convolutional Neural Network-Based Computer Vision Systems in Animal Farming: A Review - Li et al (2021)

Vision Extras

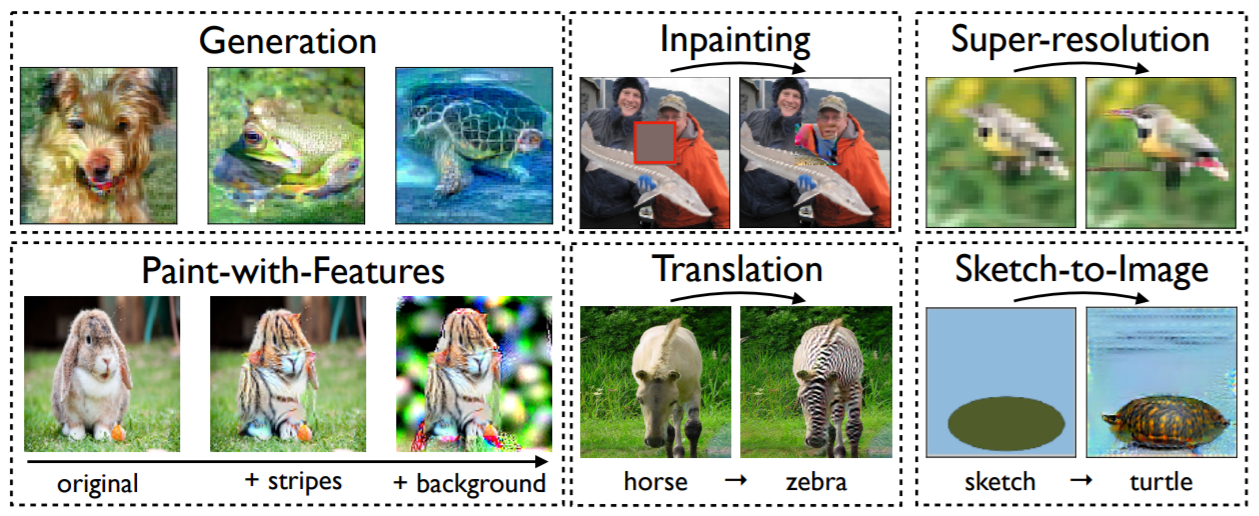

Image Synthesis with a Single (Robust) Classifier - Santurkar et al (2019)

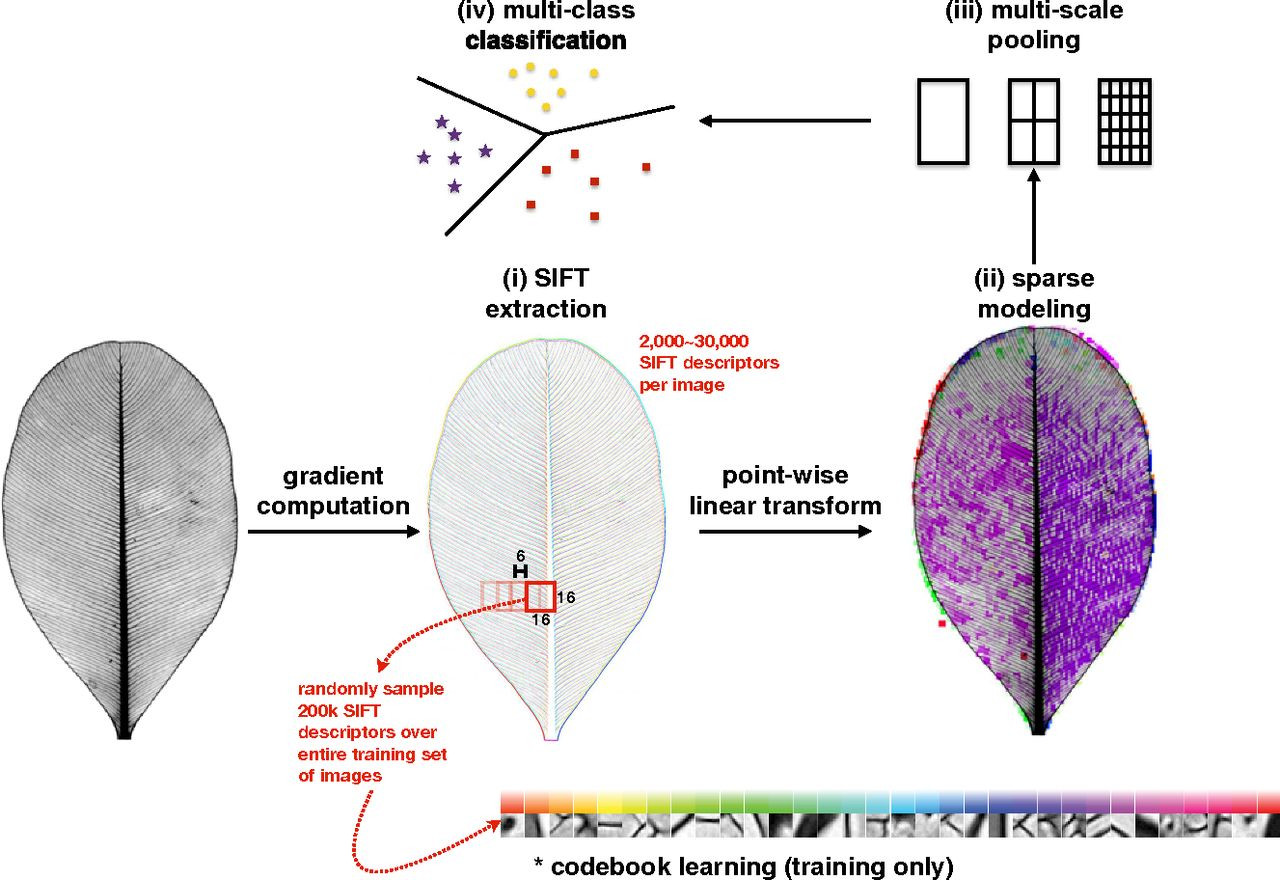

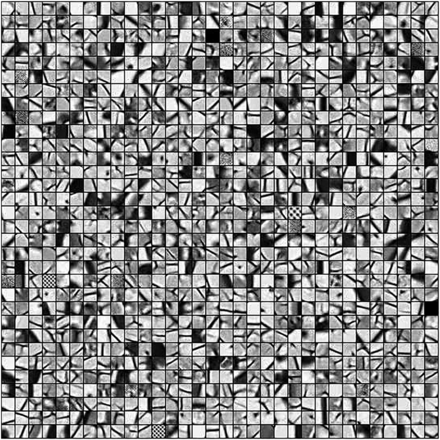

Pre-Deep Learning Vision

- Get a bunch of patches

- Match them to the image

- Use Match vs not-Match as features

- ... as input to a 'shallow' classifier
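The 'codebook' approach above can be sketched in plain Python. This is a minimal illustration under assumptions: the two 2x2 patches, the tiny image and the match threshold are made up for the example, and matching uses the sum of squared differences (one simple choice among many). The resulting 0/1 vector is what would then be fed to a 'shallow' classifier (e.g. an SVM), which is not shown.

```python
# Minimal sketch of pre-deep-learning "codebook" features:
# hand-made patches are slid over the image, and "match / no-match"
# becomes a binary feature vector. Patches, image and threshold are illustrative.

Image = list[list[float]]

def best_match_score(image: Image, patch: Image) -> float:
    """Smallest sum-of-squared-differences of `patch` over all positions in `image`."""
    ph, pw = len(patch), len(patch[0])
    best = float("inf")
    for r in range(len(image) - ph + 1):
        for c in range(len(image[0]) - pw + 1):
            ssd = sum(
                (image[r + i][c + j] - patch[i][j]) ** 2
                for i in range(ph)
                for j in range(pw)
            )
            best = min(best, ssd)
    return best

def codebook_features(image: Image, codebook: list[Image], threshold: float = 0.5) -> list[int]:
    """1 if a patch matches somewhere in the image (SSD <= threshold), else 0."""
    return [1 if best_match_score(image, p) <= threshold else 0 for p in codebook]

# Hand-designed codebook: a vertical edge and a horizontal edge (2x2 each)
vertical = [[1.0, 0.0],
            [1.0, 0.0]]
horizontal = [[1.0, 1.0],
              [0.0, 0.0]]

# An image containing a single vertical line
image = [[0.0, 1.0, 0.0, 0.0],
         [0.0, 1.0, 0.0, 0.0],
         [0.0, 1.0, 0.0, 0.0],
         [0.0, 1.0, 0.0, 0.0]]

features = codebook_features(image, [vertical, horizontal])
print(features)  # [1, 0]
```

Every patch here is designed by hand, which is exactly the part that becomes hard to scale.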

OpenCV-era plan

Computer vision cracks the leaf code - Wilf et al (2016)

OpenCV-era codebook

Computer vision cracks the leaf code - Wilf et al (2016)

Key take-aways

- Images have key elements:

- Textures; Lines; Shapes

- Translational invariance

- ...

- But building these elements by hand is too difficult

- Results ~ way below human performance

Deep Learning version

- Make the computer learn from data:

- Make the 'codebook' into ...

- ... trainable parameters

- Results ~ better than humans on some benchmarks!

Deep Learning requirements

- Need a 'patch detector' Layer:

- Parameterised patches across whole input image

- Need a 'scaling' Layer:

- Allow several 'scales' within image

- ... so parts can relate to whole

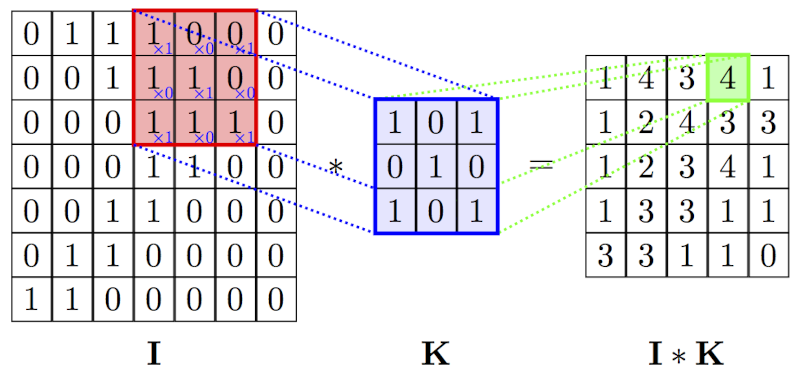

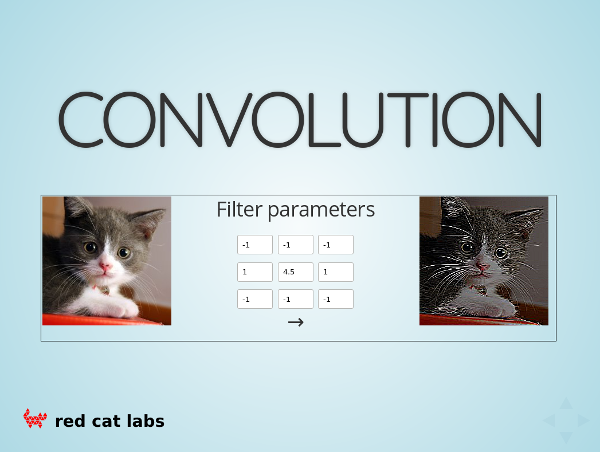

Convolutional Layer

- Key ideas:

- Have small 'patches' ...

- ... with trainable parameters

- ... and slide over whole image

- ... to make a new image

- Similar to other Layers : Combinable
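To make the 'slide a small patch over the whole image' idea concrete, here is a minimal pure-Python sketch of a single-channel Conv2d with stride 1 and no padding. The kernel values are hand-picked (a vertical-edge detector) purely for illustration; in a real CNN they are trainable parameters. Like most deep learning libraries, it computes cross-correlation (the kernel is not flipped).

```python
def conv2d(image, kernel, bias=0.0):
    """'Valid' 2D convolution, stride 1, single channel (cross-correlation form)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            # Weighted sum of the patch under the kernel at position (r, c)
            acc = bias
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(acc)
        out.append(row)
    return out

# Hand-picked vertical-edge kernel; in a CNN these 9 numbers (+ bias) are learned
kernel = [[1.0, 0.0, -1.0],
          [1.0, 0.0, -1.0],
          [1.0, 0.0, -1.0]]

# Bright left half, dark right half
image = [[1.0, 1.0, 1.0, 0.0, 0.0, 0.0],
         [1.0, 1.0, 1.0, 0.0, 0.0, 0.0],
         [1.0, 1.0, 1.0, 0.0, 0.0, 0.0],
         [1.0, 1.0, 1.0, 0.0, 0.0, 0.0]]

feature_map = conv2d(image, kernel)
for row in feature_map:
    print(row)  # [0.0, 3.0, 3.0, 0.0]
```

The new image (feature map) is non-zero only where the window straddles the bright/dark boundary, and the same 10 parameters are reused at every position.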

CNN Operation

Play with a CNN Kernel

Conv2d Notes

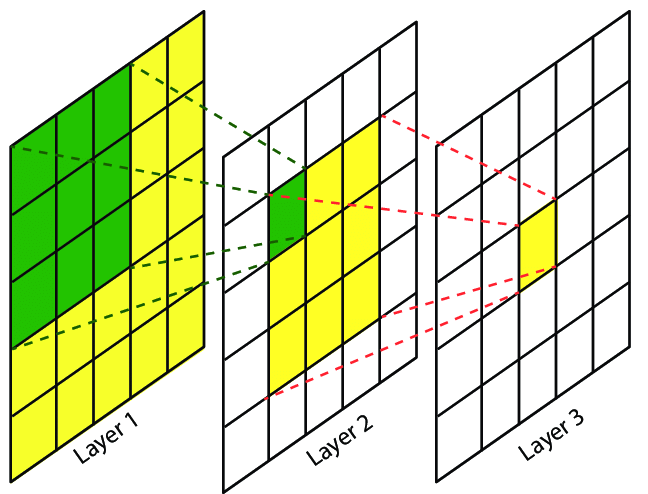

- Key ideas:

- Just a few parameters for whole image

- Patches are typically small

- ... but are stackable to increase 'area'

- Very suited to GPU speedup

- One layer can include many kernels (channels out)
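The 'just a few parameters' point can be checked with some arithmetic. The sketch below assumes a 3x3 kernel, a 32x32 RGB input and 16 output channels (illustrative sizes only) and compares a Conv2d layer with a Dense layer mapping the same input to a 32x32x16 output. It also shows how stacking stride-1 3x3 layers grows the 'area' each output pixel sees (its receptive field), ignoring pooling.

```python
def conv2d_params(kernel_size, channels_in, channels_out):
    """Conv2d weights + biases: each output channel has one k x k x c_in kernel plus 1 bias.
    Note that the image size does not appear anywhere."""
    return (kernel_size * kernel_size * channels_in + 1) * channels_out

def dense_params(inputs, outputs):
    """Dense (fully-connected) weights + biases: every input connects to every output."""
    return (inputs + 1) * outputs

def receptive_field(num_layers, kernel_size=3):
    """Side of the input patch seen by one output pixel after `num_layers`
    stacked stride-1 convolutions (no pooling)."""
    return 1 + num_layers * (kernel_size - 1)

print(conv2d_params(3, 3, 16))                   # 448
print(dense_params(32 * 32 * 3, 32 * 32 * 16))   # 50348032
print([receptive_field(n) for n in (1, 2, 3)])   # [3, 5, 7]
```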

CNN stacking

Maritime Semantic Labeling of Optical Remote Sensing Images with Multi-Scale Fully Convolutional Network - Lin et al (2017)

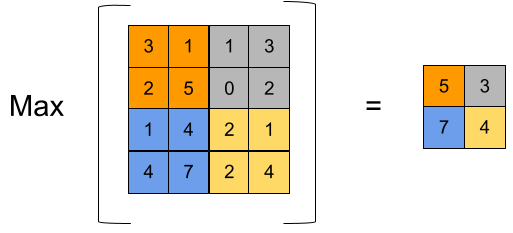

Scaling Layer

- Key ideas:

- Want to shrink image ...

- ... so that parts become 'nearer'

- ... and can then be put into a small Conv Layer

- ... to make a new (smaller) image

- Similar to other Layers : Combinable

MaxPool Operation

MaxPool2d Notes

- Key ideas:

- Just shrinks the image

- ... but with some 'competition'

- Has no trainable parameters
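A minimal sketch of MaxPool2d, assuming a 2x2 window with stride 2 (a common default): each output value is the 'winner' of its window, and there is nothing to train.

```python
def maxpool2d(image, size=2):
    """Non-overlapping max pooling (stride == size).
    Trailing rows/columns that do not fill a whole window are dropped."""
    out_h, out_w = len(image) // size, len(image[0]) // size
    return [
        [
            max(image[r * size + i][c * size + j] for i in range(size) for j in range(size))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

image = [[1, 3, 2, 0],
         [4, 2, 1, 1],
         [0, 1, 5, 6],
         [2, 2, 7, 8]]
print(maxpool2d(image))  # [[4, 2], [2, 8]]
```

A 4x4 image becomes 2x2, so features that were 2 pixels apart are now neighbours for the next Conv layer.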

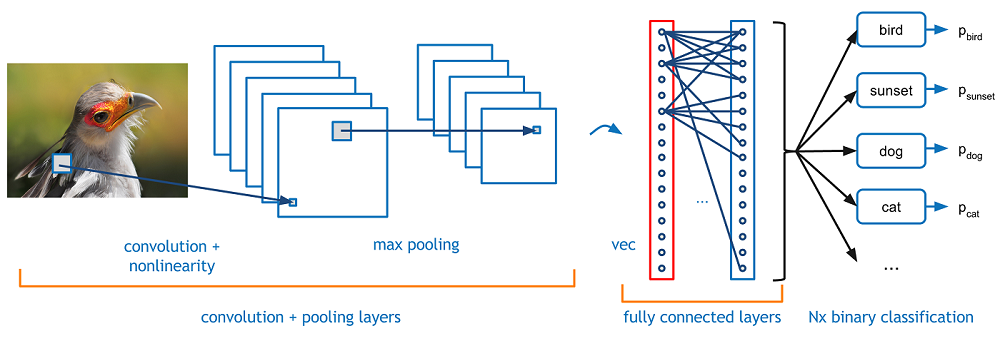

Deep Learning-era Plan

- Build the structure carefully

- CNN image layers

- ... and a classifier at the end

- Learn all the parameters

- ... of the CNN and the classifier

- Train end-to-end

Deep Learning plan

Deep Learning Model

- Input is an RGB Image (3 channels)

- Use Conv and MaxPool Layers

- Then Flatten the output

- Feed into an MLP classifier (Dense Layers)

- Output a SoftMax classification

- Train just like last week
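The shapes flowing through such a model can be traced with two small formulas. The architecture below (32x32 RGB input, two Conv(3x3) + MaxPool(2x2) blocks with 16 and 32 filters, no padding, 10 classes) is a hypothetical example, not the code-along model. The softmax and cross-entropy helpers show what the final layer outputs and a common way the classification error is measured.

```python
import math

def conv_out(size, kernel=3, stride=1, padding=0):
    """Output side length of a Conv layer: floor((size + 2*padding - kernel) / stride) + 1."""
    return (size + 2 * padding - kernel) // stride + 1

def pool_out(size, pool=2):
    """Output side length of a non-overlapping MaxPool layer."""
    return size // pool

def softmax(logits):
    """Turn raw class scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(probs, target):
    """Error for one example: -log(probability given to the true class)."""
    return -math.log(probs[target])

h = w = 32      # assumed input size: 32x32 ...
channels = 3    # ... RGB image
# Hypothetical architecture: (layer type, number of filters)
for kind, filters in [("conv", 16), ("pool", None), ("conv", 32), ("pool", None)]:
    if kind == "conv":
        h, w, channels = conv_out(h), conv_out(w), filters
    else:
        h, w = pool_out(h), pool_out(w)
    print(kind, (h, w, channels))

flat = h * w * channels                   # Flatten
num_classes = 10                          # assumed number of classes
dense_params = (flat + 1) * num_classes   # Dense layer feeding the SoftMax
print("flatten", flat, "-> dense params", dense_params)

probs = softmax([2.0, 1.0, 0.1])          # example scores from the Dense layer
print([round(p, 3) for p in probs], "loss if class 0 is correct:", round(cross_entropy(probs, 0), 3))
```

With these assumptions the shapes go 32x32x3 -> 30x30x16 -> 15x15x16 -> 13x13x32 -> 6x6x32 (the odd 13 loses a row and column when pooled), so 1152 values enter the Dense classifier.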

Summary so far

- We're about to do hands-on Deep Learning!

- In the code-along you will:

- Grab a more difficult dataset

- Build a model using :

- Conv2d, MaxPool2d, Flatten, Dense and SoftMax

- Measure the model error and fit the model to the data

- ... and do some model analysis

- We'll get to do some Transfer Learning next week...

- Code-Along! -

Extension... :

Representation Learning

- Let's think about what we've built:

- CNN Layers learn something ...

- ... that can be easily classified

- Comparing to the OpenCV version:

- The CNN layers learn about 'vision'

- Dense layers learn about our classes

- IDEA : use the trained 'vision' part

- ... separately from the original task

Representations ⇒

Transfer Learning

- New Process :

- Find a difficult (generic) vision task

- ... and learn to solve it (hard)

- Then, take the trained network to pieces

- Use the pieces to build new models

- This allows us to re-use large datasets & models

- ... and get good results with small(er) datasets
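The 'take the network to pieces' recipe can be sketched at toy scale. Here a hypothetical 'pretrained' vision part (two hand-set 2x2 edge kernels followed by a global max, standing in for real trained Conv layers) is kept frozen, and only a new logistic-regression head is trained on a tiny labelled dataset of vertical vs horizontal stripes. All names, kernels and data are illustrative assumptions.

```python
import math

# Hypothetical "pretrained" vision part: in practice these would be Conv kernels
# learned on a large generic task; here they are hand-set stand-ins, and they are
# FROZEN (train_head below never changes them).
FROZEN_KERNELS = [
    [[1.0, -1.0], [1.0, -1.0]],   # vertical-edge detector
    [[1.0, 1.0], [-1.0, -1.0]],   # horizontal-edge detector
]

def extract_features(image):
    """Frozen feature extractor: |2x2 convolution| then a global max per kernel."""
    feats = []
    for k in FROZEN_KERNELS:
        responses = [
            abs(sum(image[r + i][c + j] * k[i][j] for i in range(2) for j in range(2)))
            for r in range(len(image) - 1)
            for c in range(len(image[0]) - 1)
        ]
        feats.append(max(responses))
    return feats

def train_head(dataset, epochs=200, lr=0.5):
    """New, small classifier head (logistic regression) trained with SGD.
    Only w and b are updated; the feature extractor stays fixed."""
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for feats, label in dataset:
            z = sum(wi * xi for wi, xi in zip(w, feats)) + b
            p = 1.0 / (1.0 + math.exp(-z))
            grad = p - label  # d(binary cross-entropy)/dz
            w = [wi - lr * grad * xi for wi, xi in zip(w, feats)]
            b -= lr * grad
    return w, b

def predict(w, b, feats):
    return 1 if sum(wi * xi for wi, xi in zip(w, feats)) + b > 0 else 0

# Tiny labelled dataset for the *new* task: 1 = vertical stripes, 0 = horizontal stripes
vertical = [[1.0, 0.0, 1.0], [1.0, 0.0, 1.0], [1.0, 0.0, 1.0]]
horizontal = [[1.0, 1.0, 1.0], [0.0, 0.0, 0.0], [1.0, 1.0, 1.0]]
dataset = [(extract_features(vertical), 1), (extract_features(horizontal), 0)]

w, b = train_head(dataset)
print([predict(w, b, feats) for feats, _ in dataset])  # [1, 0]
```

In a real framework the same pattern is usually: load a pretrained network, drop its final classifier layers, mark the remaining layers as non-trainable, and add a fresh Dense + SoftMax head for the new classes.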

Further Study

- Field is growing very rapidly

- Lots of different things can be done

- Easy to find novel methods / applications

Deep Learning Foundations

- 3 week-days + online content

- Play with real models & Pick-a-Project

- Funding, Certificates, etc

- 3 day course

Deep Learning for PMs

( = Foundations - code + management )

- Much more about 'big picture'

- Only a few code examples

- Project process standardised

- 3 day course

Vision (Advanced)

Advanced Computer Vision with Deep Learning

- Advanced classification

- Generative Models (Style transfer)

- Deconvolution (Super-resolution)

- U-Nets (Segmentation)

- Object Detection

- 2 day course

Sequences (Advanced)

Advanced NLP and Temporal Sequence Processing

- Named Entity Recognition

- Q&A systems

- seq2seq

- Neural Machine Translation

- Attention mechanisms

- Attention-is-all-You-Need

- 3 day course

Unsupervised methods

- Clustering & Anomaly detection

- Latent spaces & Contrastive Learning

- Autoencoders, VAEs, etc

- GANs (WGAN, Conditional-GAN, CycleGAN)

- Reinforcement Learning

- 2 day course

Real World Apps

Building Real World A.I. Applications

- DIY : node-server + redis-queue + python-ml

- TensorFlow Serving

- TensorFlow Lite + CoreML

- Model distillation

- ++

- 3 day course

Deep Learning

MeetUp Group

- Next Regular Meeting ~ 21-December

- Hosted by Google

- Typical Contents :

- Talk for people starting out

- Something from the bleeding-edge

- Lightning Talks

- MeetUp.com / Machine-Learning-Singapore

Event Extras

- QUESTIONS -

Martin @ RedDragon . ai

Sam @ RedDragon . ai

My blog : http://mdda.net/

GitHub : mdda